Sudhanshu Agrawal

Research Engineer @ Qualcomm AI Research

Hi everyone! My name is Sudhanshu and I'm an ML Research Engineer at Qualcomm AI Research, where I work on LLM efficiency research under the supervision of Mingu Lee. We invent new speculative decoding algorithms for edge applications, making LLMs fast enough to run locally on your phone or laptop! I previously studied at UCLA, where I graduated in 2023 with a double major in Computer Science and Mathematics. While at UCLA, I was fortunate to conduct research with Professor Aditya Grover on generative modeling and with Professors Levon Nurbekyan and Samy Wu Fung on mean-field games. In my free time, I like to surf, play the guitar, and sing. I also love watching movies, reading, and listening to music. Feel free to reach out if you'd like to chat!

Experience

ML Research Engineer

Qualcomm AI Research

LLM efficiency, speculative decoding, efficient architectures, diffusion LLMs.

2023 - Present

ML Engineering Intern

Qualcomm AI Research

Profiling tools for deep learning applications.

Summer 2022

ML Engineering Intern

SonicJobs

Synthetic computer vision dataset creation.

Summer 2021

ML and Data Science Intern

Reliance Jio

Hydrocarbon property prediction using classical ML.

Summer 2020

ML Intern

Julia Computing Inc

Contributions to the Flux Model Zoo library.

Summer 2019

Education

Bachelor of Science, Computer Science

University of California, Los Angeles (UCLA)

2019 - 2023

Magna cum laude

Bachelor of Science, Mathematics

University of California, Los Angeles (UCLA)

2019 - 2023

Cum laude

ISC 12th Grade

Mallya Aditi International School, Bengaluru

2017 - 2019

National Rank 4

Publications

arXiv Preprint

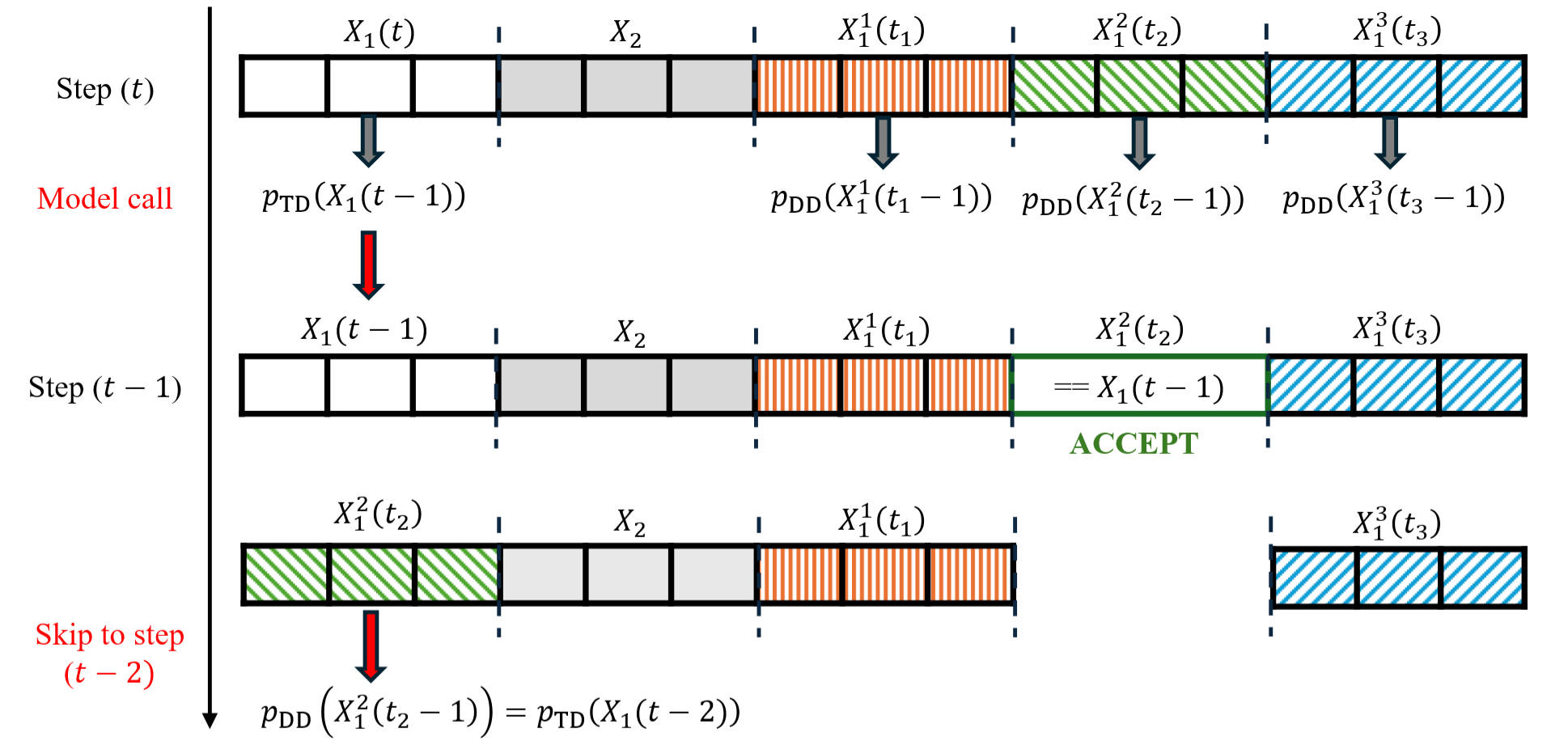

Spiffy: Multiplying Diffusion LLM Acceleration via Lossless Speculative Decoding

Novel speculative decoding algorithm to accelerate diffusion LLMs.

2025

ICML ES-FoMo Workshop, 2025

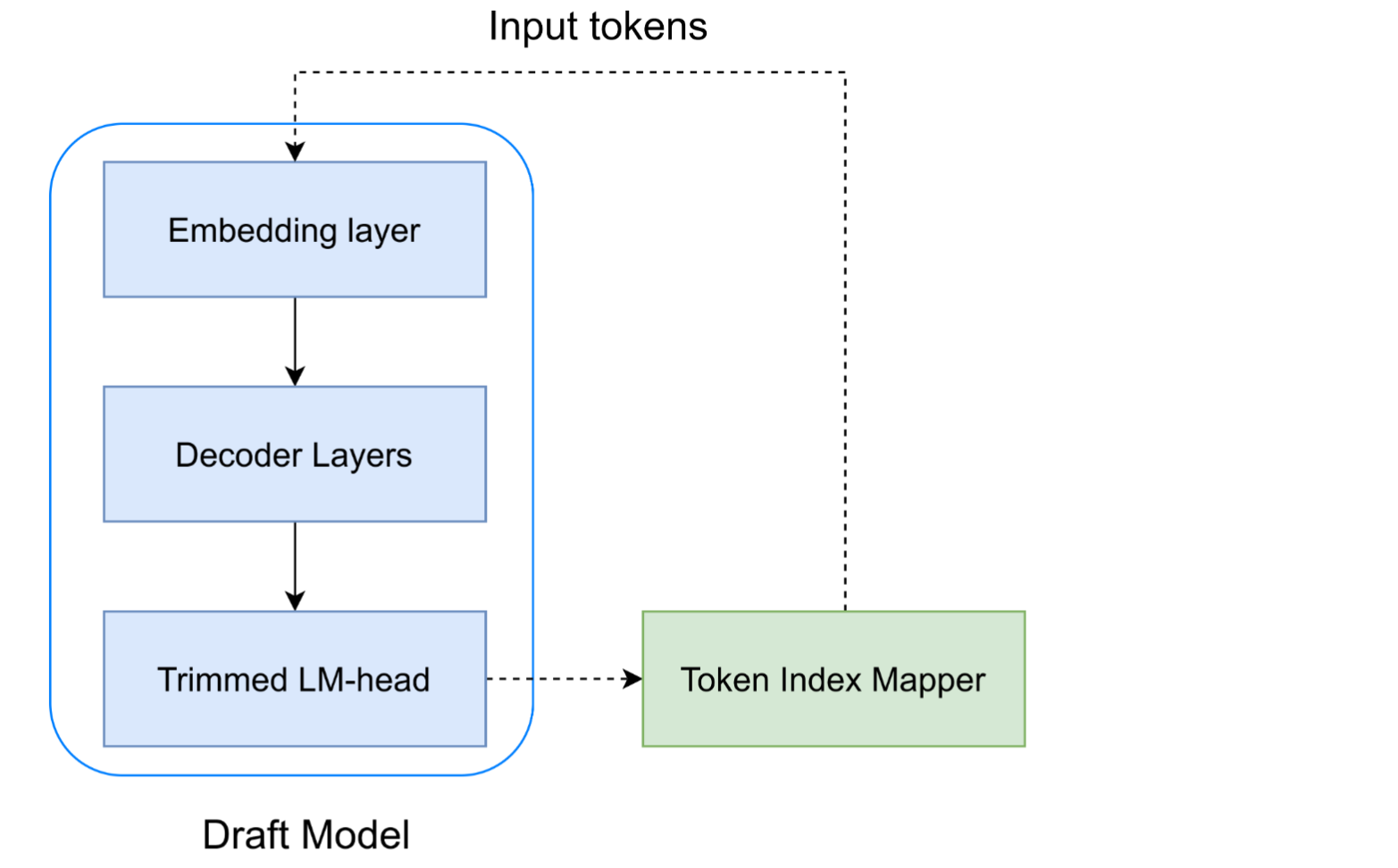

VOCABTRIM: Vocabulary Pruning for Efficient Speculative Decoding in LLMs

Pruning the draft model's vocabulary to cut memory-bandwidth overhead during speculative decoding.

2025

NeurIPS ENLSP-IV Workshop, 2024

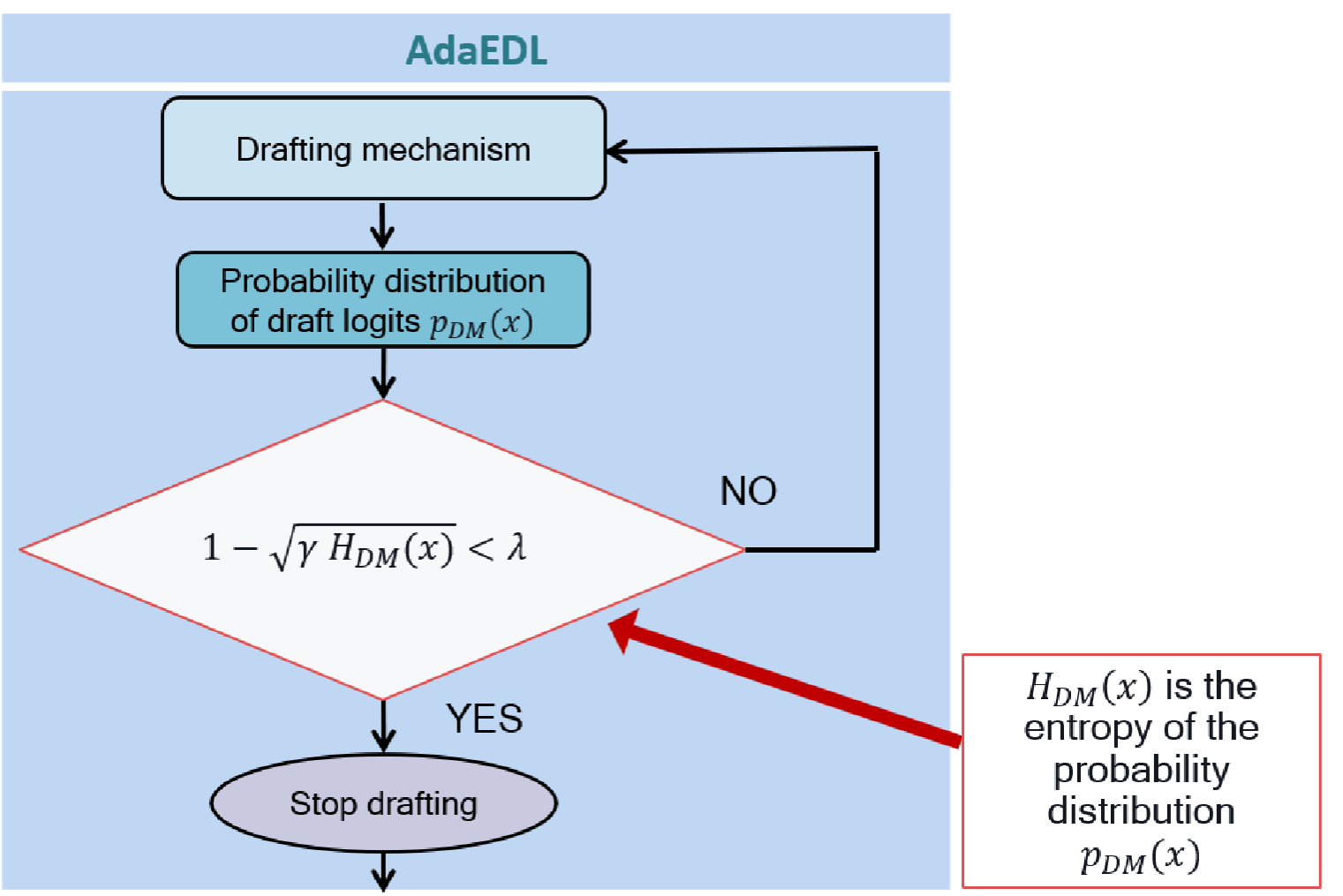

AdaEDL: Early Draft Stopping for Speculative Decoding of Large Language Models via an Entropy-based Lower Bound on Token Acceptance Probability

Entropy-based early draft stopping for efficient speculative decoding.

2024

NeurIPS, 2023

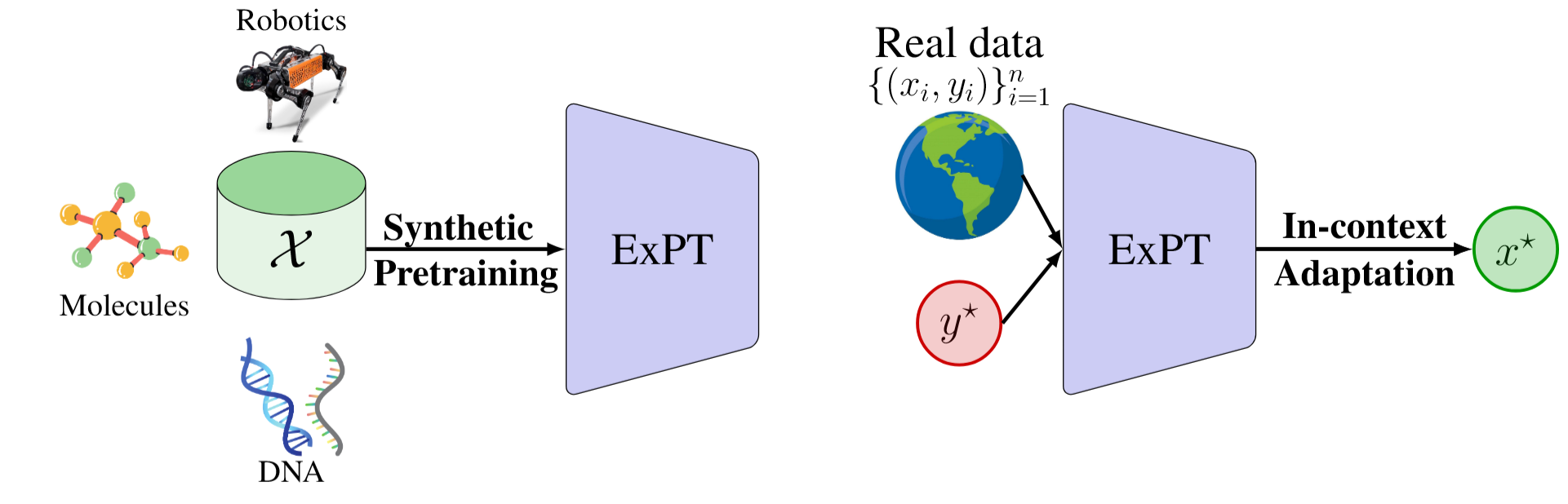

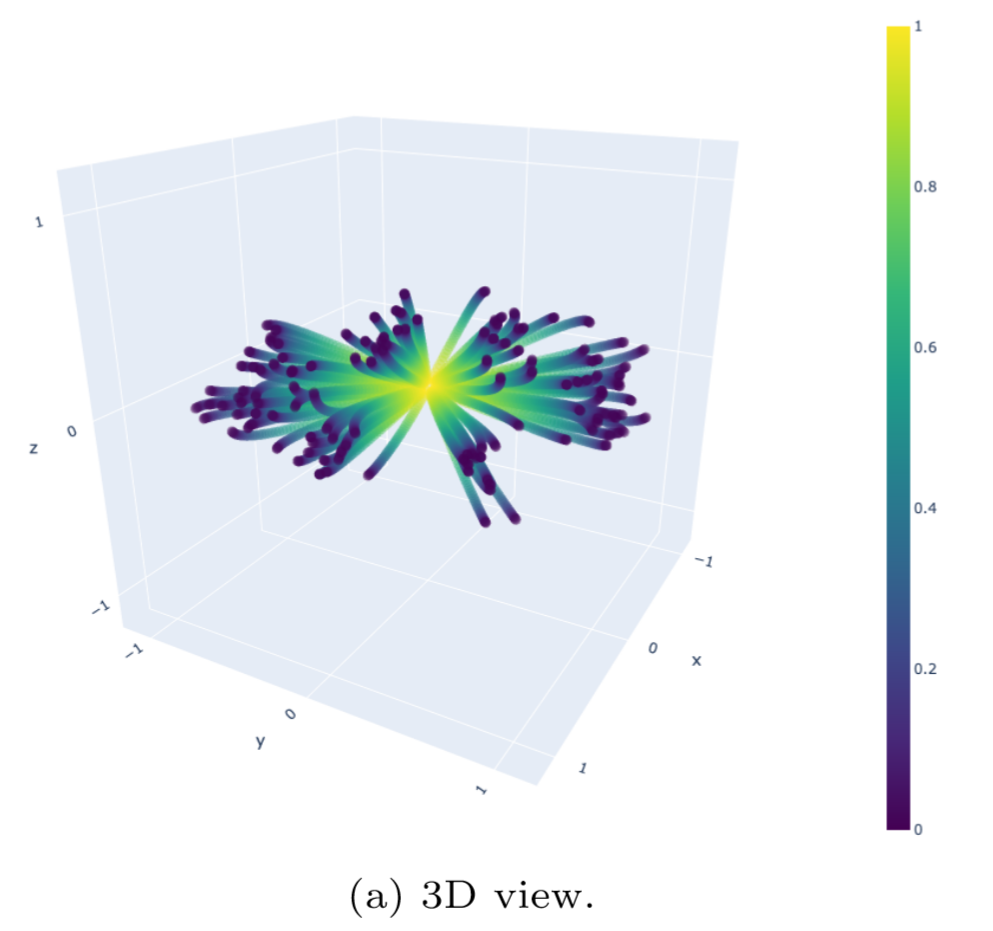

ExPT: Synthetic Pretraining for Few-Shot Experimental Design

Foundation model architecture for in-context adaptation to experimental design objectives.

2023

Journal of Computational Physics, 2022

Random Features for High-Dimensional Nonlocal Mean-Field Games

Using random-feature kernels to model mean-field interactions efficiently in high-dimensional settings.

2022

Patents

The following patent applications were filed between 2024 and 2026, so not all of them have been published yet. They cover 8 distinct inventions, with multiple pending US and international patent applications.

- US 63/944,624 (lead inventor)

- US 63/938,500 (lead inventor)

- WO PCT/CN2025/134909

- WO PCT/CN2025/124672

- US 63/872,751

- US 63/849,613 (lead inventor)

- US 19/273,664 (lead inventor)

- WO PCT/US2025/037170 (lead inventor)

- US 19/086,578 (lead inventor)

- US 18/983,103 (lead inventor)

- US 63/688,654 (lead inventor)

Blog

Medium

Generative AI for Experimental Design

Using generative modeling to solve offline black-box optimization problems.

2024

FluxML.ai

Simulating the Motion of Charged Bodies

Simulating an N-body problem using gradient descent.

2023

Invited Talks | Judgeships | Reviewing

- Reviewer: 2026 AAAI Conference

- Judge: 2025 UCSD Graduate Student Research Exposition

- Judge: 2025 San Diego State University Student Research Symposium

- Reviewer: 2025 ICML Efficient Systems for Foundation Models Workshop

- Reviewer: 2025 NeurIPS Efficient Natural Language and Speech Processing Workshop

- Speaker: 2024 UCSD and Qualcomm Graduate Student Tech Talk and Recruitment Event

- Speaker: 2024 UCSD, IEEE, and Qualcomm Careers Panel

- Speaker: 2024 UCLA Mathematics Department Alumni Panel